Conversational Search is the ability to talk with your memories. You can have conversations with your captured workflow context—ask specific questions, search through your memories, and filter by apps or time ranges to customize which memories you're talking with. All responses are powered by Long-Term Memory (LTM-2.7).

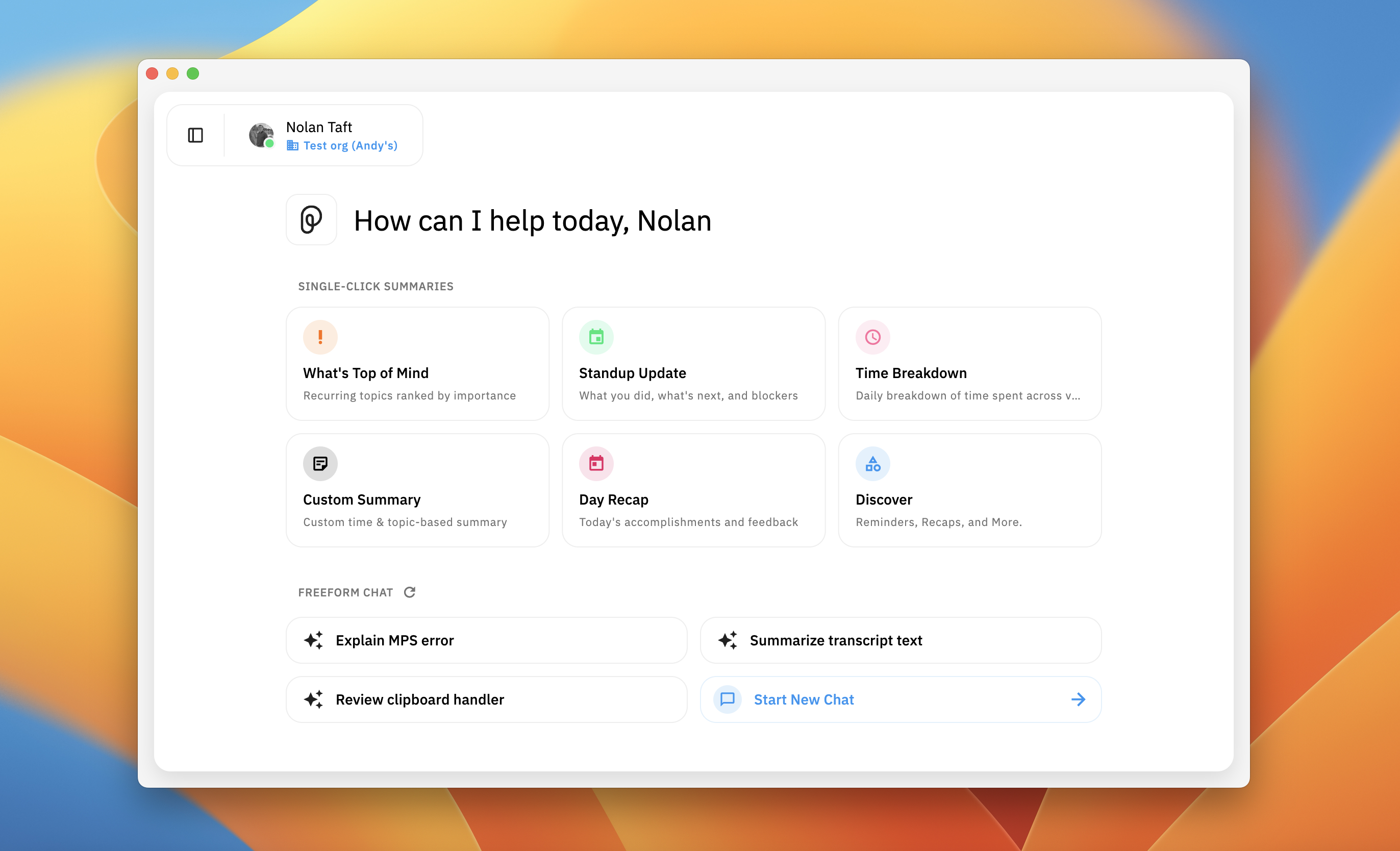

Full Conversational Search interface on homepage showing suggested prompts, chat input, model selector, and bottom toolbar

Talking with Your Memories

Ask specific questions about your past work, decisions, or activities, and Conversational Search answers from your captured workflow context.

Suggested Prompts

Suggested prompts appear as clickable buttons based on your recent workflow. Click any prompt to instantly start that conversation. Responses include Related Timeline Events cards showing which memories were used as sources.

Clicking a suggested prompt, showing the complete flow from click to response with Related Timeline Events cards

Asking Your Own Questions

Type your own questions to have conversations with your memories about specific topics or moments.

Example questions:

- "Why did we choose PostgreSQL over MySQL for the authentication project?"

- "What was the blocker I encountered last Tuesday afternoon?"

- "Find that React performance article I read last month"

- "What did Sarah and I discuss about the API redesign in Teams?"

- "Show me the code changes I made related to WebSocket connections"

Start New Chat button and input field with example question typed

Starting Fresh Chats

Use Start New Chat when you want to discuss a completely different topic. Conversational Search carries relevant memory context across the entire conversation, so a new chat keeps context from the earlier topic out of your results.

Scoping a chat to one summary or event

You cannot attach local files or folders to Conversational Search. To focus the assistant on one specific memory (for example a generated summary), open that item in Pieces Timeline, open the three-dots menu (⋮) on the event, and choose Chat. See Chat from a summary. For a broader slice of memories, use Sources, Time Ranges, and Modalities below.

Filtering Your Searches

Filter by specific apps, time ranges, or modalities to narrow your search scope and get more focused answers.

Sources Filter

Limit searches to memories from specific applications. Use this when you want answers based only on specific apps.

Sources filter modal showing list of apps with checkboxes, with Chrome and VS Code selected

Time Ranges Filter

Focus searches on specific time periods. Use this when you need information from a specific period.

Time Ranges modal showing preset options and calendar view

Modalities Filter

Restrict searches to specific types of captured context—what you've seen, copied, said, or scheduled. Use this when you want answers grounded in a particular kind of memory rather than a particular app.

- **Vision** — what you've seen (screen context captured by LTM).

- **Clipboard** — what you've copied and pasted.

- **Audio** — what you've said and heard.

- **Google Calendar** — events and meeting context from connected calendars.

Some modalities depend on connected integrations or permissions (e.g. Google Calendar, microphone access for Audio). If a modality is unavailable, open Manage Connections to finish setup.

Combining Filters

Use Sources, Time Ranges, and Modalities together for precise queries—for example, "Chrome browsing from yesterday afternoon," or "anything I copied from Slack last week," or "meetings on my Google Calendar this month."
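One way to picture combined filters is as conditions that must all hold for a memory to be in scope. The sketch below is illustrative only: the `Memory` record, its field names, and the `matches` helper are hypothetical and are not part of Pieces or its API. It assumes filters narrow the scope by intersection, which is how the examples above read.

```python
from dataclasses import dataclass
from datetime import datetime


# Hypothetical record type; field names are illustrative, not the Pieces data model.
@dataclass
class Memory:
    app: str
    modality: str
    captured_at: datetime
    text: str


def matches(memory, sources=None, modalities=None, start=None, end=None):
    """Return True when the memory passes every filter that is set (None means no filter)."""
    if sources is not None and memory.app not in sources:
        return False
    if modalities is not None and memory.modality not in modalities:
        return False
    if start is not None and memory.captured_at < start:
        return False
    if end is not None and memory.captured_at >= end:
        return False
    return True


memories = [
    Memory("Chrome", "Vision", datetime(2025, 3, 10, 14, 30), "React performance article"),
    Memory("Slack", "Clipboard", datetime(2025, 3, 10, 15, 0), "copied deploy command"),
    Memory("Chrome", "Vision", datetime(2025, 3, 8, 9, 0), "older browsing session"),
]

# Roughly "Chrome browsing from the afternoon of 10 March": a source plus a time range.
afternoon = [
    m for m in memories
    if matches(m, sources={"Chrome"},
               start=datetime(2025, 3, 10, 12), end=datetime(2025, 3, 10, 18))
]
print([m.text for m in afternoon])  # prints ['React performance article']
```

Each filter you add can only shrink the set of memories in scope, which is why combining them tends to give more focused answers.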

Working with Responses

Ask Follow-Up About Selected Text

Highlight any text in an AI response and click Ask follow-up to add it as context for your next message. The selected text appears in a context box above the chat input—type your follow-up question and send. The box can be dismissed with the X icon if you change your mind.

Response Toolbar Actions

All response actions are available in the toolbar below each response:

- **Model & time**: A chip shows which model produced the answer and how long ago the response was generated.

- **Copy**: Click the clipboard icon to copy the entire response to your clipboard.

- **Export**: Click the export icon to download the response as PDF (for sharing or printing), Markdown (for editing), or Plain Text.

- **Regenerate**: Click the regenerate icon (circular arrow) to re-run the response with the same model, or switch to a different one for comparison.

- **Convert to Timeline Event**: Click the paper/document icon to save an important response. It then appears in the memories sidebar for later reference.

- **Use as Context**: Click the three-dot menu (⋮) and select "Use as Context" to add the response as context for follow-up questions.

Response toolbar showing Copy, Export, Regenerate, Convert to Timeline Event, and More menu buttons labeled

Related Timeline Events

Cards appear below responses showing which source Timeline Events were used to generate the answer. Click any card to view the full Timeline Event details and verify where information came from.

Response with Related Timeline Events cards below showing memory titles and timestamps

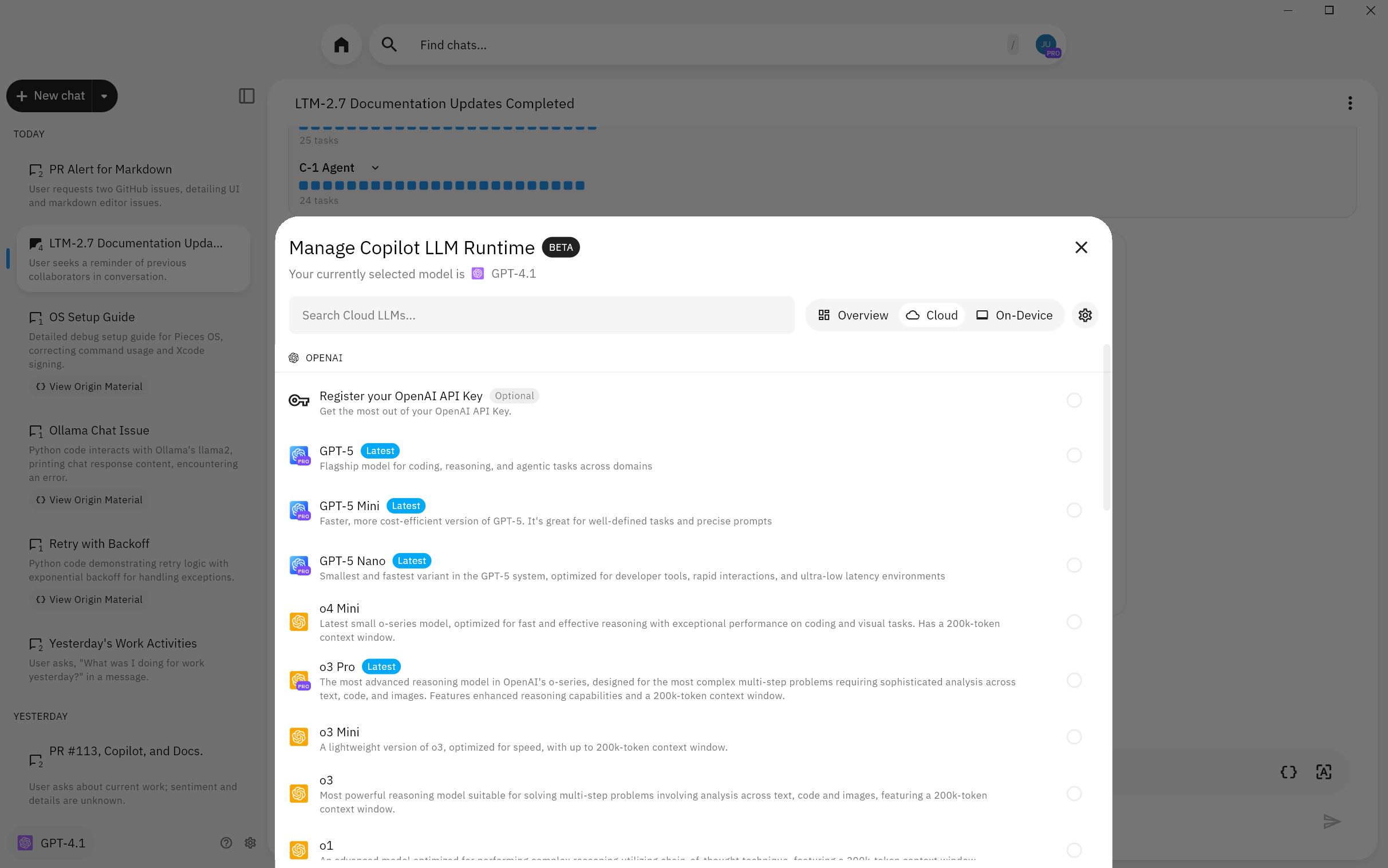

Model Selection

Switch between cloud and local models based on your needs. Use cloud models (Claude, GPT, Gemini) for faster responses and advanced reasoning. Use local models, which run entirely on your device, for complete privacy and offline capability.

Model selector dropdown

LLM runtime modal

Click the active model control (for example the model name in the bottom toolbar) to open the LLM runtime modal. There you can enter API keys if needed, switch models, and open the full catalog of local and cloud-hosted models served through Pieces.

Cloud models from OpenAI, Anthropic, and Google (and others) are listed on the Cloud Models page. For on-device options and privacy, use local models; the full catalog for the Desktop App is in supported local and cloud models.

Browse and download local models

Open the LLM runtime modal, open All Models, then scroll to find local models. Select a model to download it on demand through PiecesOS; once downloaded, you can run it entirely on your device.

Reset a conversation

When you need a clean slate, use Chat Options on an active thread to reset the conversation so context and messages start fresh for that chat.

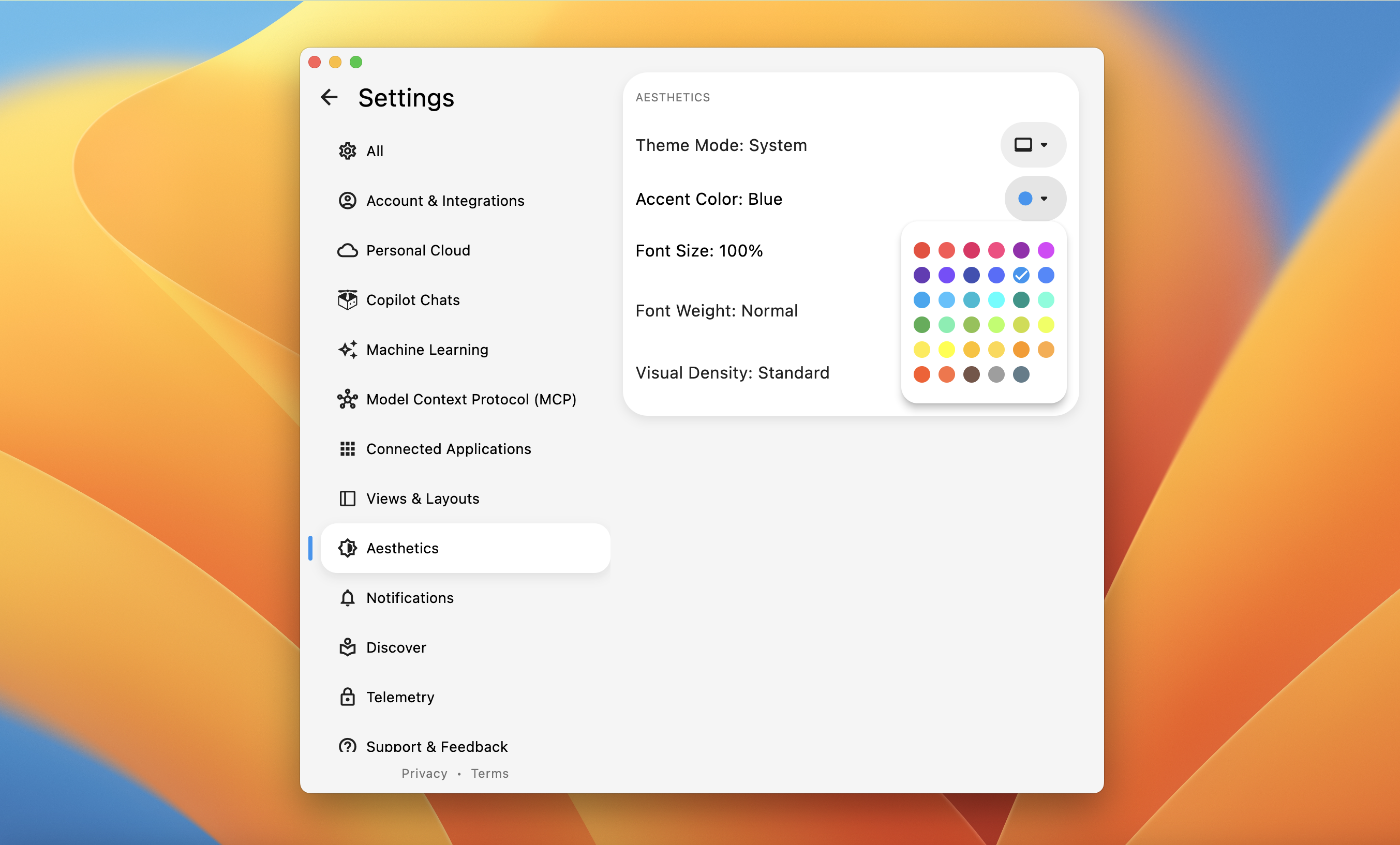

Chat appearance and defaults

In the LLM runtime area, open the Settings gear to set a chat accent color and choose whether LTM context is on by default for new chats.

You can also press Cmd+Shift+T (macOS) or Ctrl+Shift+T (Windows/Linux) to toggle between the Desktop App's dark and light themes.

Asking Effective Questions

The more specific your questions, the better Conversational Search can find relevant memories and provide accurate answers.

Use Specific Keywords

Include unique keywords related to what you're looking for—project names, ticket numbers, package names, or specific topics.

Good: "What is the status of project Aurora?"

Bad: "What is the status of my project?"

Include Time Ranges

Specify when something happened to narrow down results. Conversational Search stores up to 9 months of memories.

Examples:

- "What decision did we make about the database schema last week?"

- "What were the plans I received in December?"

- "What was I debugging yesterday afternoon?"

Mention Source Applications

Reference specific apps to separate similar content across different sources.

Example: "What did Sarah and I discuss in Teams about the deployment?"

This separates Teams conversations from emails or document comments.

Combine Techniques

Mix keywords, time ranges, and applications for the most accurate results.

Example: "What is the URL for the Project Aurora document I discussed in Teams with Sarah last Thursday?"

This combines the keyword "Project Aurora," the application "Teams," the person "Sarah," and the time "last Thursday" to narrow down results precisely.

Use Filters Instead of Prompts

If you know the exact source app, time range, or type of memory you're looking for, use the Sources, Time Ranges, and Modalities filters instead of describing them in your prompt. Filters are more accurate than natural language time expressions.

LTM Context Toggle

Control whether Conversational Search includes Long-Term Memory context in your conversations. When enabled, your chats automatically draw from up to 9 months of captured workflow context.

Enabling or Disabling LTM Context

User profile menu showing LTM-2.7 hover menu with pause and turn off options

Viewing the Relevant Summaries Sidebar

After receiving a response, see exactly which Timeline Events were used to generate it.

Managing Your Chat History

All conversations automatically save to the memories sidebar, listed chronologically with other workflow activities. Click any saved chat to reopen it with full history and preserved filter settings. Use Focus Mode (the control at the top of the sidebar) to collapse the sidebar when you want to concentrate on the active thread.

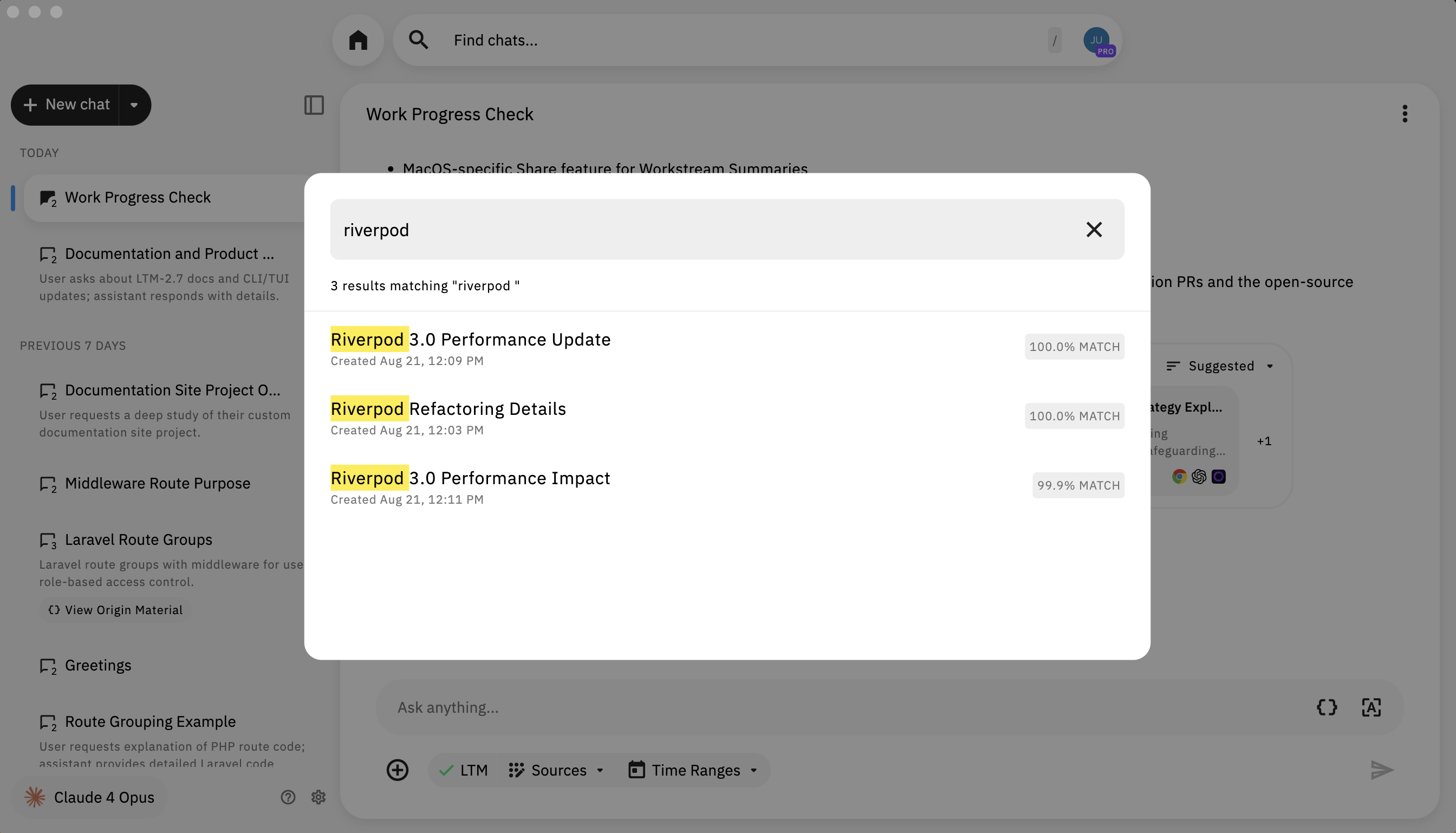

Find chats

Click the search field labeled Find chats… at the top of the Conversational Search view to open a modal of recent threads. Each row can show a relevance percentage (or an EXACT MATCH label). Type a query and press Enter / Return to run the search; matching text is highlighted in the results.

Input field, quick actions, and chat options

The input area supports rich prompts: paste code, drop an image, or ask a technical question. Use the quick-action (chat bubble) menu beside the field for shortcuts (for example toggling LTM-related options). On the right, tools can insert a fenced code block or extract code from a screenshot via the file picker.

To scope the whole chat to one captured summary or event, use Chat on that item’s three-dots menu in Timeline—not the input shortcuts. See Chat from a summary and Scoping a chat from Timeline.

Chat options menu

On an active thread (after you have sent messages), open the ⋮ menu at the top of the chat to Pin Chat, Refresh if generation stalls, or Delete the conversation. These options do not appear on a blank new chat.

Deep Study (Pieces Pro)

Deep Study uses your Long-Term Memory to produce sourced, timestamped research-style reports across apps, people, topics, and time ranges.

- Deep contextual recall across collaborators, apps, and sessions

- Recaps over days, weeks, or months

- Targeted queries with people, topics, projects, and dates

- Actionable insights with links to artifacts and suggested next steps

Ask naturally—for example: "Can you perform a deep study on what I've done for the last few days?"

How to activate Deep Study (Pro)

At the bottom of the chat, click Activate DeepStudy (marked PRO). Your next prompt runs the Deep Study workflow. Deep Study requires Long-Term Memory to be enabled.

What to expect

- Duration: Often on the order of 10–20 minutes depending on how much recent context is included.

- Progress: After you submit a deep-study prompt, you’ll see progress and a Thinking state; expand sections to inspect intermediate agent steps.

Model used for Deep Study

Deep Study runs on a dedicated cloud model managed by Pieces (currently a Google model; subject to change). Your per-chat model choice applies to normal replies; Deep Study always uses its own runtime.

Example prompts

Perform a deep study of what I worked on last week related to WebSocket errors.

Tell me everything I did with [PERSON NAME] over the last 3 months.

What did I accomplish related to [TOPIC] in [MONTH]?

When was the last time I worked on [PROJECT/FILE]?

Next Steps

Now that you know how to use Conversational Search, learn how to start context-specific conversations directly from your workflow memories.